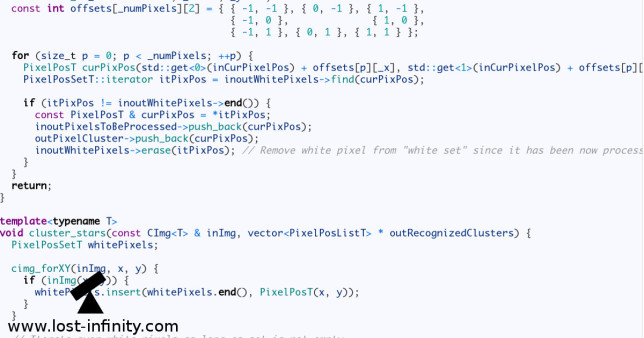

In this post I want to show a way of processing Milky Way images that does not require any commercial software. The idea is to use RawTherapee, DeepSkyStacker and GIMP to develop, align and combine the frames into one final image.

Camera: Fujifilm X-T1, exposure time: 25 s per frame, ISO: 1250, aperture: f/2.8, focal length: 18 mm

The problem

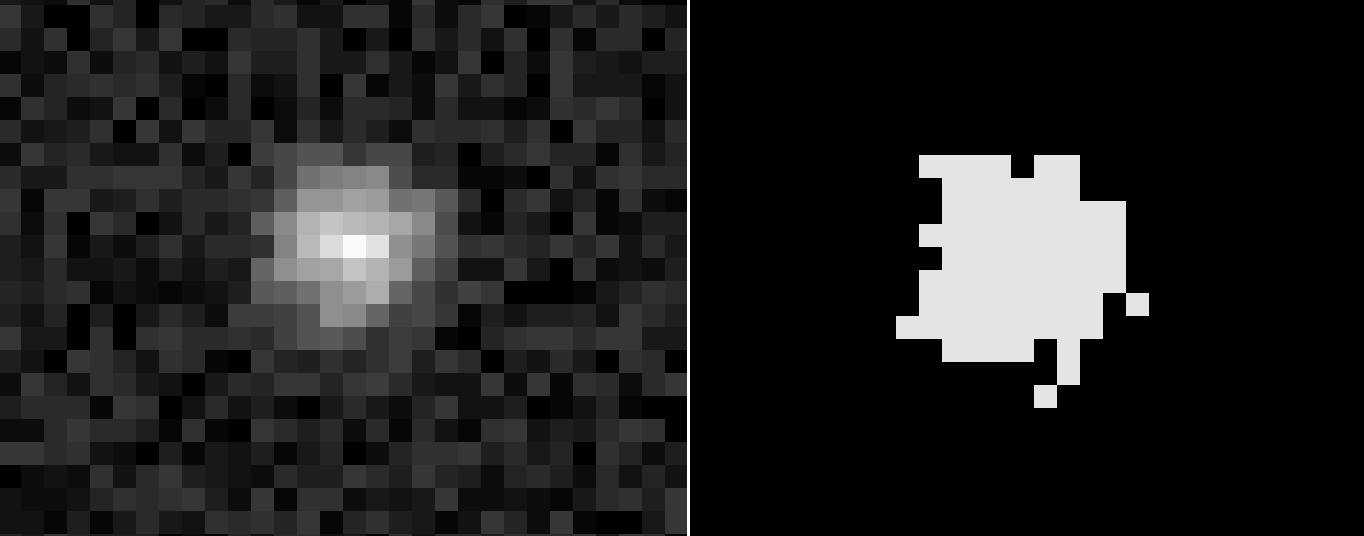

Quite often it is advantageous to use a high ISO value to capture as much detail as possible in the available exposure time (before the Earth's rotation becomes visible as star trails). On the other hand, one probably does not want too much noise in the image. Therefore, the idea is to take multiple frames at a high ISO value and stack them later to reduce the noise. There is just one problem: the Earth rotates, so the stars are in different positions from frame to frame. The frames therefore have to be aligned on the stars before averaging. But then we get another problem: once the stars are aligned, the foreground no longer lines up between frames, so it ends up blurry in the averaged result. What to do?
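As a side note on why stacking reduces noise at all: if the noise in each frame is random and independent, averaging N aligned frames should lower it by roughly a factor of the square root of N. The following is only a minimal sketch with synthetic data, not what DeepSkyStacker does internally. The function names, the flat "signal" of 100 and the Gaussian noise model are all assumptions made for illustration.

```python
import random
import statistics


def simulate_frame(true_value, noise_sigma, n_pixels, rng):
    """Return one synthetic frame: a flat signal plus Gaussian noise per pixel.

    Hypothetical stand-in for a real exposure; real sensor noise is more complex.
    """
    return [true_value + rng.gauss(0.0, noise_sigma) for _ in range(n_pixels)]


def stack_mean(frames):
    """Average frames pixel by pixel (assumes they are already aligned)."""
    n = len(frames)
    return [sum(pixel_values) / n for pixel_values in zip(*frames)]


def measure_noise(n_frames=16, n_pixels=5000, noise_sigma=10.0, seed=0):
    """Return (noise of a single frame, noise of the stacked result)."""
    rng = random.Random(seed)
    frames = [
        simulate_frame(100.0, noise_sigma, n_pixels, rng)
        for _ in range(n_frames)
    ]
    single = statistics.pstdev(frames[0])
    stacked = statistics.pstdev(stack_mean(frames))
    return single, stacked


if __name__ == "__main__":
    single, stacked = measure_noise()
    print(f"noise in a single frame: {single:.2f}")
    print(f"noise after stacking:    {stacked:.2f}")
```

With the 16 frames used here, the stacked noise should come out at roughly a quarter of the single-frame noise. The sketch also shows why alignment matters: the average only works if the same pixel index refers to the same spot of sky in every frame.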
